AI Sprint Planning and Predictive Risk: The Future of SaaS Delivery

22 Feb 2026

Software Development

Velocity is no longer the hard part. Predictability is.

AI coding assistants have made it easier to scaffold features, refactor, and generate tests quickly. In a controlled study published by GitHub, developers using Copilot finished a coding task 55% faster than developers who did not.

Here is the twist: output goes up, but delivery volatility does not automatically go down.

If anything, many teams feel a new kind of sprint chaos:

- Estimates swing harder than they used to.

- Review queues and QA queues become the real calendar.

- “We will know in a few days” becomes the default status update.

This is where AI sprint planning matters, not as a buzzword, but as a practical shift from “what can we commit to?” toward “what is the probability we finish what we commit to?”

What AI sprint planning actually is

AI sprint planning uses historical delivery data to forecast capacity, map dependencies, and predict sprint risk before work starts.

Traditional sprint planning tends to rely on:

- story points and team velocity

- static assumptions about availability

- gut feel on dependency risk

AI sprint planning treats sprints as a scheduling and coordination system. It asks:

- What has historically caused spillover for us?

- Where is our workload balancing failing (dev vs review vs QA)?

- Which dependencies are likely to stall and when?

- What is the probability this sprint hits scope, not just “tries”?

This “probability-first” framing fits the reality of modern software delivery: the constraint is often coordination, approvals, and shared environments, not the act of writing code. Playbook is positioned around this reality in its software development offering: sprint and backlog management, multi-team dependency tracking, integrated QA and bug tracking, capacity forecasting, and integrations that tie work to version control and CI/CD signals.
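To make the "probability we finish what we commit to" question concrete, here is a minimal sketch that assumes you have a history of items fully finished per week. It resamples past weeks (a simple Monte Carlo bootstrap) to estimate how often a given scope would have been met. The function name `hit_probability`, the sample history, and the two-week sprint length are illustrative assumptions, not part of any Playbook API.

```python
import random

def hit_probability(weekly_throughput, committed_items, sprint_weeks=2,
                    trials=10_000, seed=42):
    """Estimate the chance a sprint finishes at least `committed_items`.

    Each trial builds one simulated sprint by drawing `sprint_weeks`
    past weeks at random (with replacement) and summing their throughput.
    """
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    hits = 0
    for _ in range(trials):
        simulated = sum(rng.choice(weekly_throughput) for _ in range(sprint_weeks))
        if simulated >= committed_items:
            hits += 1
    return hits / trials

# Hypothetical history: items finished (merged, validated, approved) per week.
history = [4, 6, 5, 3, 7, 5, 2, 6]
for scope in (8, 10, 12):
    print(scope, round(hit_probability(history, scope), 2))
```

Running it for a few candidate scopes shows how the estimate falls as the commitment grows, which is the number worth discussing in planning rather than a single velocity figure.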

Why sprint scheduling got harder even as coding got faster

SaaS delivery is no longer a clean chain of “dev then test then ship.” A single feature can fan out across backend services, frontend work, APIs, infrastructure, security review, QA environments, and release gating. Playbook’s own product messaging emphasizes dependencies, critical paths, approvals, and capacity as first-class scheduling concerns across complex projects.

At the same time, AI changes sizing in unpredictable ways:

- Some work shrinks drastically (boilerplate, repetitive changes, test scaffolding).

- Some work expands unexpectedly (integration conflicts, architectural drift, security constraints, tech-debt exposure).

So the planning problem shifts. It is less “how many points can we do?” and more “where are we likely to get stuck?”

The five failure points that break sprint predictability

Below are five failure modes that show up repeatedly in sprint scheduling, release scheduling, and timeline optimization. For each one, there is a concrete way to model it, a way to mitigate it, and a way to operationalize it inside an execution platform like Playbook.

Capacity miscalculation (resource allocation that assumes equality)

Most teams still plan as if each engineer contributes evenly. Real capacity varies because:

- senior engineers carry architecture and reviews

- support tickets and incident response interrupt flow

- meetings and context switching cut usable capacity

How to model it:

- look at throughput by person and by work type (feature vs bug vs review)

- include interrupt load as a “capacity tax” rather than pretending it is free

- treat capacity as dynamic week-to-week, not a static sprint constant
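The modeling points above can be sketched in a few lines, assuming a simple log of completed work and an estimated interrupt fraction per week. The record layout, the names `throughput_by_person_and_type` and `effective_capacity`, and all numbers are hypothetical.

```python
from collections import defaultdict

# Hypothetical completed-work log: (person, work_type, items_done)
completed = [
    ("ana", "feature", 3), ("ana", "review", 9),
    ("ben", "feature", 6), ("ben", "bug", 2),
    ("ana", "feature", 2),
]

def throughput_by_person_and_type(records):
    """Sum finished items per person and per work type."""
    totals = defaultdict(lambda: defaultdict(int))
    for person, work_type, count in records:
        totals[person][work_type] += count
    return {person: dict(by_type) for person, by_type in totals.items()}

def effective_capacity(nominal_hours, interrupt_fraction):
    """Apply the interrupt 'capacity tax' to one week of nominal hours."""
    if not 0 <= interrupt_fraction <= 1:
        raise ValueError("interrupt_fraction must be between 0 and 1")
    return nominal_hours * (1 - interrupt_fraction)

print(throughput_by_person_and_type(completed))

# Capacity varies week to week: an assumed on-call week carries a heavier tax.
weekly_tax = [0.25, 0.5]
print([effective_capacity(40, tax) for tax in weekly_tax])
```

The breakdown makes it visible when a senior engineer's "throughput" is mostly reviews, which is capacity the feature plan cannot also claim.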

How Playbook supports the workflow:

- capacity and resource forecasting is part of the software development feature set, and cross-project reporting is positioned around predictability, bottlenecks, and delivery timelines.

Code review becomes the hidden critical path (timeline optimization bottleneck)

AI can multiply code output, but it does not automatically multiply reviewer bandwidth.

What tends to happen:

- pull requests accumulate mid-sprint

- review latency pushes integration late

- “done” work is not actually merged, tested, and shippable

How to model it:

- track PR aging and time-to-first-review as a queue, not an afterthought

- measure review load per reviewer (how many active PRs are waiting on them)

- model review SLAs as a time-bound dependency, like an inspection gate
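As a rough illustration of treating review as a queue, the sketch below computes reviewer load, SLA breaches, and average time-to-first-review from a list of pull request records. The record fields, the 24-hour SLA, and the function name `review_queue_report` are assumptions for the example; in practice the data would come from your version control system.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical PR records; first_review is None while a PR is still waiting.
open_prs = [
    {"id": 101, "opened": datetime(2026, 2, 16, 9),
     "first_review": datetime(2026, 2, 16, 15), "reviewer": "ana"},
    {"id": 102, "opened": datetime(2026, 2, 17, 10),
     "first_review": None, "reviewer": "ana"},
    {"id": 103, "opened": datetime(2026, 2, 18, 14),
     "first_review": None, "reviewer": "ben"},
]

def review_queue_report(prs, now, sla=timedelta(hours=24)):
    """Summarize the review queue at time `now`."""
    waiting = [pr for pr in prs if pr["first_review"] is None]
    # Active PRs waiting on each reviewer.
    load = Counter(pr["reviewer"] for pr in waiting)
    # Waiting PRs that have aged past the review SLA.
    breaches = [pr["id"] for pr in waiting if now - pr["opened"] > sla]
    # Time-to-first-review for PRs that already received one.
    waits = [pr["first_review"] - pr["opened"] for pr in prs if pr["first_review"]]
    average = sum(waits, timedelta()) / len(waits) if waits else None
    return {"load": dict(load), "sla_breaches": breaches,
            "avg_time_to_first_review": average}

print(review_queue_report(open_prs, now=datetime(2026, 2, 18, 16)))
```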

How Playbook supports the workflow:

- Playbook positions real-time notifications, dependency tracking, and version control linkage (commits, pull requests, pipelines) as part of its R&D execution approach for software teams.

QA and staging overload (workload balancing across dev and validation)

QA is often treated as “after dev,” but in practice QA capacity and environment availability behave like scarce resources.

How to model it:

- treat QA as a scheduled resource with assigned capacity

- forecast regression load based on open bug trends and recent change volume

- include environment availability (staging, test data refresh, release trains) as constraints
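One simple way to treat QA as a scheduled resource is to track a day-by-day backlog: QA hours arriving from development versus QA hours available, with capacity set to zero on days when staging is unavailable. This is a minimal sketch under those assumptions; `qa_backlog_by_day` and the sample numbers are hypothetical.

```python
def qa_backlog_by_day(arrivals, daily_capacity):
    """Return the unfinished QA hours at the end of each day.

    arrivals[d]       : QA hours of work handed over on day d
    daily_capacity[d] : QA hours available on day d (0 if staging is down)
    """
    backlog = 0.0
    result = []
    for arriving, capacity in zip(arrivals, daily_capacity):
        # Unused capacity does not carry over; backlog never goes negative.
        backlog = max(0.0, backlog + arriving - capacity)
        result.append(backlog)
    return result

capacity = [8, 8, 8, 8, 0, 8, 8, 8, 8, 8]     # day 5: assumed staging refresh
late_batch = [0, 0, 0, 0, 0, 0, 0, 16, 16, 16]  # everything lands at sprint end
spread_out = [0, 6, 6, 6, 6, 6, 6, 6, 6, 0]     # same 48 hours, handed over earlier

print(qa_backlog_by_day(late_batch, capacity)[-1])
print(qa_backlog_by_day(spread_out, capacity)[-1])
```

Comparing the final backlog for the two arrival patterns shows why late batching, not total QA effort, is often what causes spillover.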

How Playbook supports the workflow:

- integrated QA workflows and bug tracking are presented as part of the platform for software development teams, which is exactly what you need if you want QA to be visible in the schedule rather than invisible until it is late.

Cross-team dependency clusters (project scheduling risk that is usually invisible in Jira tickets)

A story can look “independent” in an issue tracker but still depend on:

- a platform capability release

- an API contract change

- a shared service migration

- security sign-off

The failure mode is not a single dependency. It is a cluster: multiple teams unknowingly colliding on the same shared bottleneck.

How to model it:

- build dependency graphs across teams and epics

- identify shared-service congestion in advance

- flag “critical path” work that is cross-functional, not just within one squad
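A dependency graph does not need special tooling to be useful. The sketch below, using hypothetical work items and team names, finds items that several other teams are waiting on (a dependency cluster) and the longest dependency chain, which is a rough stand-in for the critical path. It assumes the graph has no cycles.

```python
from collections import defaultdict

# Hypothetical work items: name -> (owning team, items it depends on)
items = {
    "auth-api-v2":  ("platform", []),
    "billing-ui":   ("web",      ["auth-api-v2"]),
    "mobile-login": ("mobile",   ["auth-api-v2"]),
    "usage-export": ("data",     ["auth-api-v2", "billing-ui"]),
}

def shared_bottlenecks(items, min_teams=2):
    """Items that at least `min_teams` other teams are waiting on."""
    waiting_teams = defaultdict(set)
    for name, (team, deps) in items.items():
        for dep in deps:
            if items[dep][0] != team:  # only count cross-team dependencies
                waiting_teams[dep].add(team)
    return {dep: sorted(teams) for dep, teams in waiting_teams.items()
            if len(teams) >= min_teams}

def longest_chain(items):
    """Length (in items) of the longest dependency chain; assumes no cycles."""
    memo = {}

    def depth(name):
        if name not in memo:
            deps = items[name][1]
            memo[name] = 1 + max((depth(d) for d in deps), default=0)
        return memo[name]

    return max(depth(name) for name in items)

print(shared_bottlenecks(items))
print(longest_chain(items))
```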

How Playbook supports the workflow:

- multi-team dependency tracking is explicitly called out for development teams to visualize blockers and handoffs across squads.

Approval and governance drift (the unmodeled schedule gate)

Many SaaS teams forget to schedule approvals with the same discipline as coding tasks:

- product sign-off

- security review

- compliance validation

- stakeholder approvals for scope changes

If approvals are not modeled as real dependencies with lead times, sprint timelines become optimistic fiction.

How to model it:

- add explicit approval tasks with owners and due dates

- track approval cycle time as you would track QA cycle time

- model “approval risk” based on historical patterns (late approvals, unclear acceptance criteria)
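Approval cycle time can be tracked the same way as any other cycle time. As a minimal sketch, assuming you record how many days each past approval took and how many days the plan allowed, the function below reports the median cycle time and the share of late approvals per approval type. The data and the name `approval_risk` are hypothetical.

```python
from statistics import median

# Hypothetical history: approval type -> list of (days_taken, days_allowed)
approval_history = {
    "security":   [(5, 3), (2, 3), (4, 3), (3, 3)],
    "product":    [(1, 2), (1, 2), (2, 2)],
    "compliance": [(7, 5), (6, 5)],
}

def approval_risk(history):
    """Median cycle time and late rate for each approval type."""
    report = {}
    for kind, records in history.items():
        late = sum(1 for taken, allowed in records if taken > allowed)
        report[kind] = {
            "median_days": median(taken for taken, _ in records),
            "late_rate": late / len(records),
        }
    return report

for kind, stats in approval_risk(approval_history).items():
    print(kind, stats)
```

A high late rate for one approval type is a signal to schedule that approval earlier or give it more lead time in the next sprint.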

How Playbook supports the workflow:

- the platform highlights built-in approval workflows and change management as core mechanisms for reducing bottlenecks and speeding decisions, and it positions AI-driven red-flag detection for schedule bottlenecks and capacity conflicts.

How to apply AI sprint planning in practice

Here is a pragmatic, step-by-step way to implement AI-enhanced sprint planning (without turning it into a science project). This is written for a SaaS team that already ships regularly and wants better predictability, workload balancing, and risk-adjusted commitments.

Step one: define “done” as a schedule state, not a Jira column

For sprint predictability, “done” must mean:

- merged

- validated (automated and/or manual QA)

- approved (if required)

- ready for release or deployed (depending on your release model)

This matters because AI sprint planning is only as good as the state transitions it can see.
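Expressed as code, that definition of “done” is a conjunction of observable states rather than a board position. The `WorkItem` fields below are hypothetical names for those states; the point of the sketch is that approval only counts when it is actually required.

```python
from dataclasses import dataclass

@dataclass
class WorkItem:
    merged: bool
    validated: bool          # automated and/or manual QA passed
    approval_required: bool
    approved: bool
    release_ready: bool      # ready for release or deployed

def is_done(item):
    """True only when every state required for 'done' has been reached."""
    approval_ok = item.approved or not item.approval_required
    return item.merged and item.validated and approval_ok and item.release_ready

# Merged and tested, but still waiting on a required approval: not done.
print(is_done(WorkItem(True, True, True, False, True)))
```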

Step two: instrument the real flow of work

Minimum signals to capture:

- throughput history (what got finished per sprint)

- carryover (what routinely spills)

- PR review latency and queue size

- QA cycle time and regression load

- dependency handoffs between teams
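Carryover is the easiest of these signals to compute first. The sketch below assumes you can export committed and finished item counts per sprint, plus a label for each item that spilled; the function names and sample data are illustrative only.

```python
from collections import Counter

def carryover_rate(sprints):
    """Share of committed items that spilled, across all sprints.

    sprints: list of (committed, finished) item counts.
    """
    committed = sum(c for c, _ in sprints)
    # Cap finished at committed so over-delivery in one sprint
    # does not hide spillover in another.
    finished = sum(min(f, c) for c, f in sprints)
    return (committed - finished) / committed if committed else 0.0

def routine_spillers(spilled_labels, min_count=2):
    """Labels that show up among spilled items at least `min_count` times."""
    counts = Counter(spilled_labels)
    return [label for label, n in counts.most_common() if n >= min_count]

history = [(10, 8), (12, 12), (10, 5)]            # hypothetical sprints
spilled = ["api", "qa", "api", "infra", "qa", "api"]

print(carryover_rate(history))
print(routine_spillers(spilled))
```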

Playbook’s positioning for software teams centers on tying work to version control and pipeline signals, plus cross-project reporting for bottlenecks and delivery performance.

Step three: forecast capacity as “available execution hours,” not “headcount”

Instead of “8 engineers equals 8 engineer-weeks,” subtract:

- interrupts (support, incidents)

- review duties

- scheduled meetings

- onboarding and mentoring time

This is the basis for realistic resource allocation.
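As a worked example of that subtraction, here is a minimal sketch that assumes a two-week sprint of ten working days and per-engineer deductions in hours. All figures are hypothetical; substitute your own measured averages.

```python
def available_execution_hours(engineers, sprint_days, hours_per_day, deductions):
    """Nominal sprint hours minus per-engineer deductions (hours per sprint)."""
    nominal = engineers * sprint_days * hours_per_day
    per_engineer = sum(deductions.values())
    return max(0, nominal - engineers * per_engineer)

# Assumed average hours lost per engineer over one sprint.
deductions = {
    "interrupts": 8,   # support, incidents
    "reviews": 10,
    "meetings": 12,
    "mentoring": 4,    # onboarding and mentoring
}

print(available_execution_hours(8, 10, 8, deductions))
```

With these assumptions, 640 nominal hours shrink to 368, a little over half of what headcount alone would suggest.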

Step four: run a pre-commit risk check (spillover probability)

A simple risk pass can look like:

- Are reviewers projected to be overloaded mid-sprint?

- Is QA projected to saturate near sprint end?

- Are there dependency clusters with uncertain readiness?

- Are approvals scheduled with owners and lead time?

If any check raises a flag (overloaded reviewers, saturated QA, uncertain dependencies, or approvals without owners and lead time), treat scope as variable. Commit to what can succeed.
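The risk pass above can be sketched as a function that returns the list of flags raised before commitment. The inputs, thresholds, and the name `pre_commit_risks` are assumptions for illustration; each input would come from the signals you already track.

```python
def pre_commit_risks(reviewer_load, reviewer_limit, qa_hours_needed,
                     qa_hours_available, uncertain_dependencies, approvals):
    """Return the risk flags raised by a proposed sprint plan."""
    risks = []
    # Any reviewer projected to hold more PRs than the agreed limit.
    if any(load > reviewer_limit for load in reviewer_load.values()):
        risks.append("review overload")
    # Projected QA demand exceeds QA capacity.
    if qa_hours_needed > qa_hours_available:
        risks.append("QA saturation")
    # Dependencies whose readiness is not confirmed.
    if uncertain_dependencies:
        risks.append("uncertain dependencies")
    # Approvals missing an owner or a lead time.
    if any(not a.get("owner") or a.get("lead_days") is None for a in approvals):
        risks.append("unscheduled approvals")
    return risks

flags = pre_commit_risks(
    reviewer_load={"ana": 6, "ben": 2},
    reviewer_limit=4,
    qa_hours_needed=30,
    qa_hours_available=40,
    uncertain_dependencies=["auth-api-v2"],
    approvals=[
        {"name": "security", "owner": "sam", "lead_days": 3},
        {"name": "product", "owner": None, "lead_days": None},
    ],
)
print(flags)
```

An empty list means the plan passes the check; anything else is a prompt to adjust scope or sequencing before committing.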

Step five: make adjustment options explicit

When risk is high, you need knobs you can turn:

- reduce scope (remove the least critical story)

- split oversized stories

- rebalance reviewers

- shift QA earlier and reduce late batching

- pull approvals forward

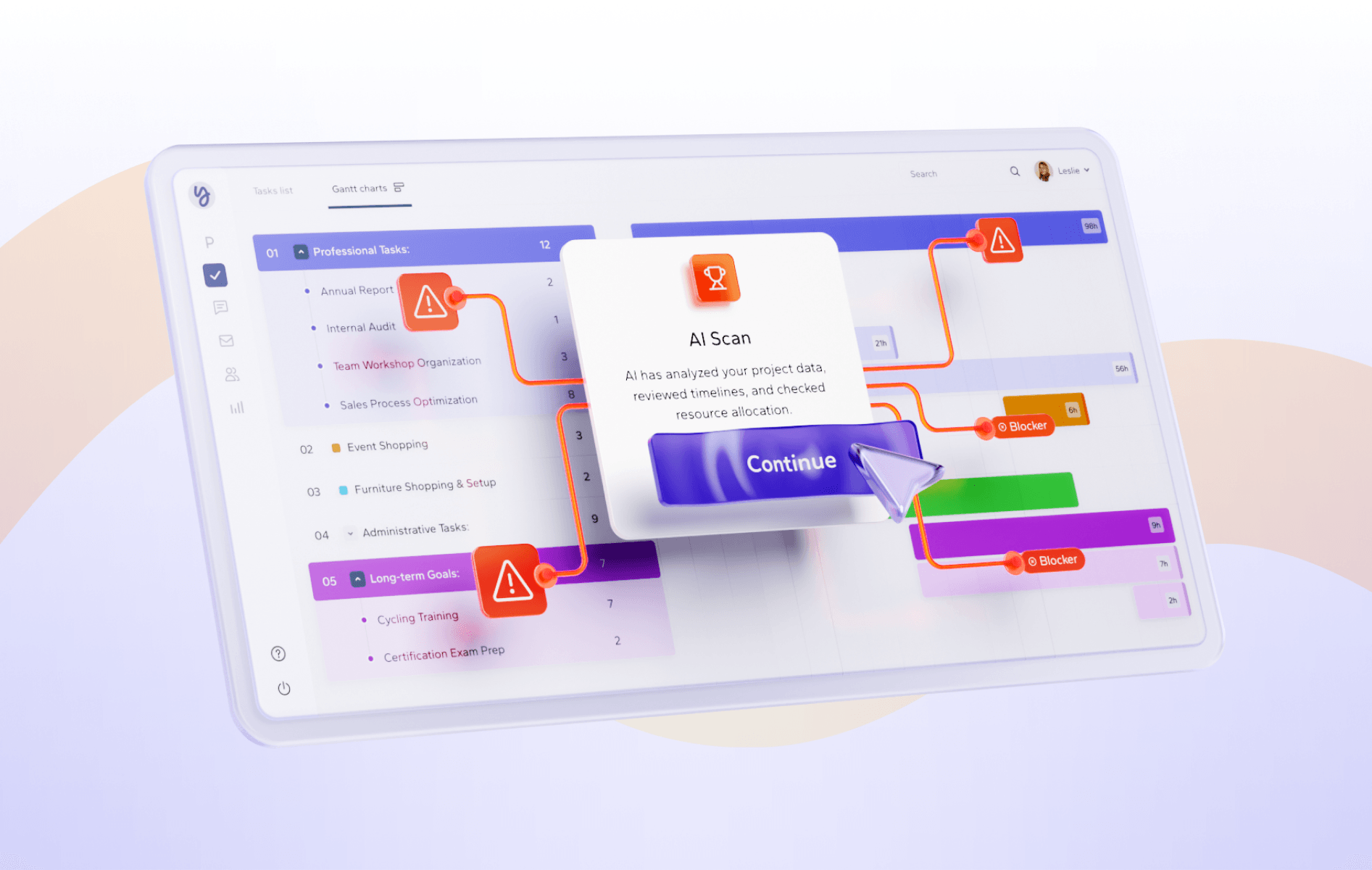

This is where an execution platform helps. Playbook also offers autonomous agents designed to detect red flags, sequence dependencies, route approvals, and push the right notifications when work stalls, which aligns with the “knobs” above.
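As one illustration of the first knob, reducing scope, the sketch below drops the least critical stories until the remaining estimates fit the available hours. The story tuples, the priority scale (higher means more critical), and the name `trim_to_capacity` are hypothetical.

```python
def trim_to_capacity(stories, capacity_hours):
    """Drop the least critical stories until the plan fits.

    stories: list of (name, priority, estimate_hours); higher priority
    means more critical. Returns (kept, dropped).
    """
    kept = sorted(stories, key=lambda story: story[1], reverse=True)
    dropped = []
    while kept and sum(story[2] for story in kept) > capacity_hours:
        dropped.append(kept.pop())  # last element is the least critical
    return kept, dropped

stories = [("checkout-flow", 3, 20), ("theme-toggle", 1, 15), ("audit-log", 2, 10)]
kept, dropped = trim_to_capacity(stories, capacity_hours=35)
print([name for name, _, _ in kept])
print([name for name, _, _ in dropped])
```

This is deliberately naive: it ignores dependencies between stories, so a real pass would also check that nothing kept relies on something dropped.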

Illustrative example (risk-adjusted sprint planning)

A mid-size SaaS org plans a two-week sprint with a full scope based on historical velocity.

An AI sprint planning pass flags:

- high dependency density around a shared API change

- review queue risk (too many parallel PRs for a small reviewer pool)

- QA regression load spiking due to recent platform changes

The team makes two adjustments: it descopes one non-critical story and pulls one approval earlier in the week.

Outcome: fewer end-of-sprint surprises, and a sprint that finishes “done” rather than “mostly done.”

Where Playbook fits if you want predictability, not just “more AI”

Playbook’s core promise is not that AI writes your code. It is that projects become smarter over time via scheduling automation and organizational memory. The homepage messaging emphasizes an “Organizational Memory Engine” where completed projects compound into reusable knowledge, plus AI-powered scheduling that can build and adapt schedules as new information arrives.

For software teams specifically, Playbook positions itself as an execution platform that unifies sprint management, dependencies, QA workflows, and real-time communication, with integrations to tools like GitHub and GitLab.

So the argument is straightforward:

- If your bottleneck is coordination, not coding, you need scheduling and execution intelligence.

- If you want delivery predictability, you need your sprint plan to reflect constraints like review, QA, and approvals.

- If you want teams to learn, you need the system to retain what happened and reuse it.

Playbook also offers a 7-day free trial and supports booking a demo, which makes it easy to validate fit with your workflow before committing.

FAQ

What is AI sprint planning?

AI sprint planning uses historical delivery data to forecast capacity, detect dependency risk, and estimate spillover probability before the sprint starts.

Why are sprints slipping more often even with AI coding tools?

Because faster code creation does not remove constraints like review bandwidth, QA capacity, shared dependencies, and approvals.

What should I measure first if I want sprint predictability?

Start with carryover rate (spillover), review latency, QA cycle time, and dependency blockers. These indicate where your schedule is lying to you.

Can this help with release forecasting and timeline optimization?

Yes, because releases slip for the same reasons sprints slip: constraints and dependencies that were not modeled early enough.

Key takeaways

- AI makes coding faster, but it can increase scheduling noise and shift the bottleneck to review, QA, and approvals.

- Sprint planning improves when it becomes probability-based and risk-aware, not only velocity-based.

- Treat code review, QA, and governance as scheduled constraints with owners and lead times.

- Use execution data (PRs, QA signals, dependencies) to drive workload balancing and realistic sprint commitments.

- Tools that build organizational intelligence over time can compound predictability, not just output.